Hadoop-based solutions are changing the way big data is conceived, stored, processed, and turned into a competitive edge. The power of Hadoop is evident in how large web companies such as Facebook and Yahoo! deploy it to handle terabytes of poly-structured data, and its design traces back to the distributed file system and MapReduce papers published by Google.

As an open-source framework, Hadoop applies distributed processing to make big data manageable. Apache Hadoop has emerged as a de facto infrastructure for managing huge data lakes because of its scalability and cost-effectiveness. Here is how this infrastructure is steadily evolving to change the big-data landscape for the better.

Scalability Going Beyond Excellence

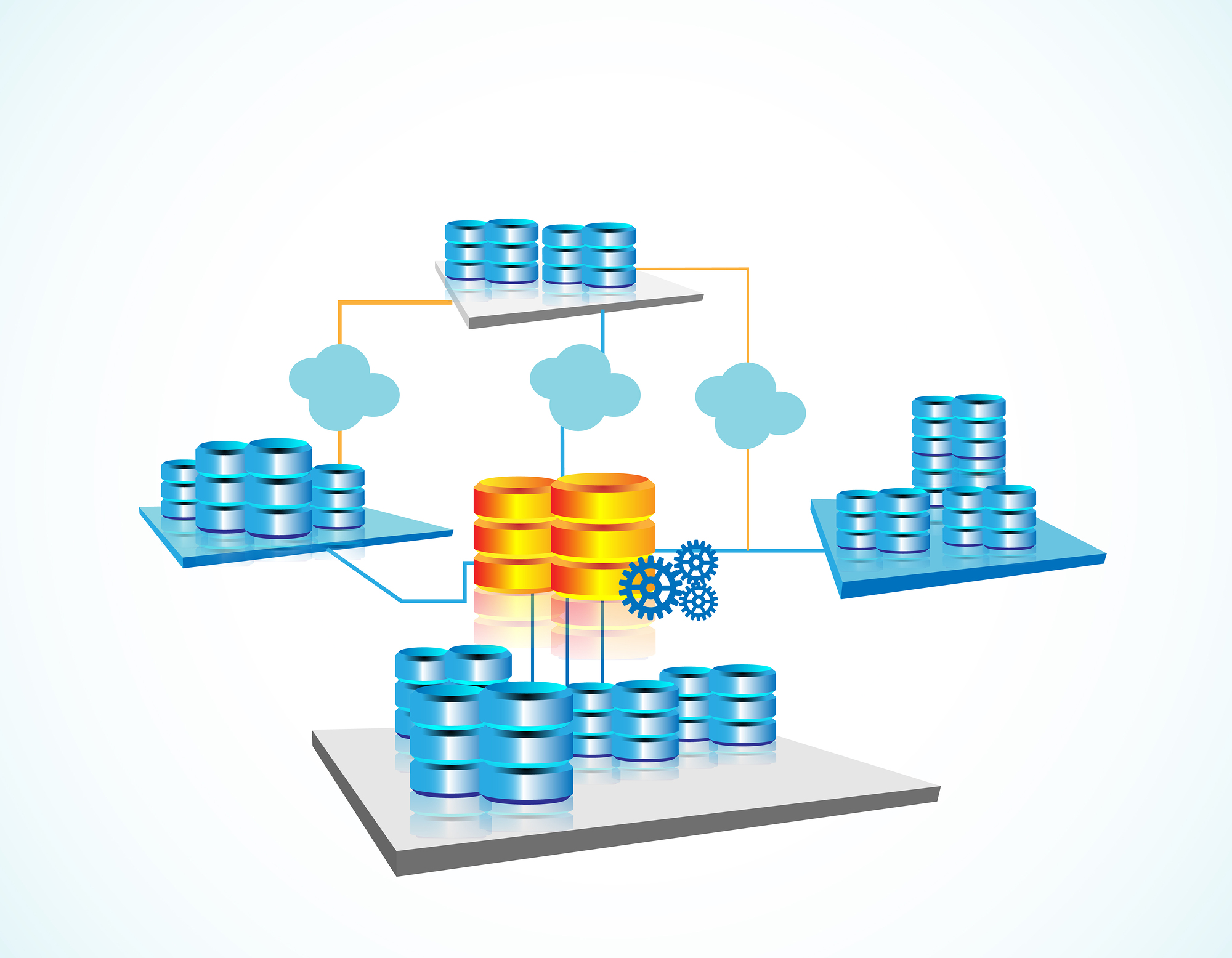

Hadoop scales by removing the throughput cap that comes from routing all data through a single server. Instead, the framework distributes and stores vast data sets across thousands of inexpensive servers operating in parallel. Unlike many traditional RDBMSs, which struggle to scale to data sets of this size, Hadoop lets enterprises run applications across many nodes over hundreds of terabytes of unstructured data.

A well-designed Hadoop deployment keeps network traffic to a minimum, so the network does not become a bottleneck when massive files are involved. The framework’s distributed-processing capabilities let it spread large data sets across clusters of commodity hardware.
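To make the idea of spreading data across commodity servers concrete, here is a minimal sketch in plain Python. It is a toy model, not Hadoop’s actual placement policy (which is rack-aware): the function names and node names are hypothetical, and the only borrowed facts are the HDFS defaults of 128 MB blocks and three replicas per block.

```python
import math

BLOCK_SIZE_MB = 128   # HDFS default block size in Hadoop 2.x and later
REPLICATION = 3       # HDFS default replication factor


def place_blocks(file_size_mb, nodes,
                 block_size_mb=BLOCK_SIZE_MB, replication=REPLICATION):
    """Return {block_index: [node, ...]} using simple round-robin placement.

    Hypothetical helper: real HDFS placement also considers racks and load.
    """
    if replication > len(nodes):
        raise ValueError("need at least as many nodes as replicas")
    num_blocks = math.ceil(file_size_mb / block_size_mb)
    placement = {}
    for block in range(num_blocks):
        # Pick consecutive nodes, wrapping around the list, so the
        # replicas of one block always land on distinct nodes.
        placement[block] = [
            nodes[(block + r) % len(nodes)] for r in range(replication)
        ]
    return placement


def blocks_per_node(placement):
    """Count how many block replicas each node ends up storing."""
    counts = {}
    for replicas in placement.values():
        for node in replicas:
            counts[node] = counts.get(node, 0) + 1
    return counts


if __name__ == "__main__":
    cluster = [f"node{i}" for i in range(1, 6)]
    layout = place_blocks(1024, cluster)   # a 1 GB file becomes 8 blocks
    print(layout[0])
    print(blocks_per_node(layout))
```

Because no single node holds the whole file, adding nodes adds both storage and read bandwidth, which is the property the section describes.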

However, if scalability is overlooked during Hadoop development, expensive changes may be needed later in the implementation lifecycle. In short, with careful planning this open-source framework can meet the most demanding data-storage requirements without running more nodes than necessary.

A Cost-Effective Solution For The Future

Hadoop is emerging as a cost-efficient alternative to many traditional extract, transform, and load (ETL) processes. These costly processes extract data from a number of systems, convert it into a structure suited to analysis and reporting, and load it into databases.

Businesses new to big data will find that its volumes can overwhelm conventional ETL processes. This is where Hadoop, a cost-effective data management tool, comes in. This open-source framework can process massive data volumes easily and quickly, something even the most efficient RDBMSs cannot do at this scale without becoming cost-prohibitive.

Hadoop is engineered as a completely scale-out architecture that can store a company’s raw data for later use. The cost savings that come with deploying this framework can be substantial. In an RDBMS, processing a single terabyte can cost tens of thousands of dollars; Hadoop, by contrast, offers storage and computing capabilities that can cost businesses just a few hundred dollars per terabyte.
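The per-terabyte comparison can be worked through with a short calculation. The two prices below are placeholder assumptions chosen from inside the ranges quoted above ("tens of thousands" and "a few hundred" dollars per terabyte); they are not measured figures, and the function names are hypothetical.

```python
# Placeholder prices, assumed for illustration only.
RDBMS_COST_PER_TB = 20_000   # assumed value within "tens of thousands of dollars"
HADOOP_COST_PER_TB = 500     # assumed value within "a few hundred dollars"


def total_cost(terabytes, cost_per_tb):
    """Linear cost model: the same price for every terabyte."""
    return terabytes * cost_per_tb


def savings(terabytes,
            rdbms_cost_per_tb=RDBMS_COST_PER_TB,
            hadoop_cost_per_tb=HADOOP_COST_PER_TB):
    """Difference between the two platforms for the same data volume."""
    return (total_cost(terabytes, rdbms_cost_per_tb)
            - total_cost(terabytes, hadoop_cost_per_tb))


if __name__ == "__main__":
    volume_tb = 100
    print("RDBMS :", total_cost(volume_tb, RDBMS_COST_PER_TB))
    print("Hadoop:", total_cost(volume_tb, HADOOP_COST_PER_TB))
    print("Saved :", savings(volume_tb))
```

Under these assumed prices the gap is a factor of 40 per terabyte, so the absolute savings grow in step with the data volume. Real costs depend on hardware, licensing, and staffing, which this linear model ignores.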

Speed Up The Performance

Hadoop accelerates data processing, which makes it well suited to environments that face a high influx of raw data. Businesses that want to thrive in data-intensive, large-scale environments should consider Hadoop for its speed. The framework uses a storage method built on a distributed file system that maps every data set to its location inside the cluster.

In Hadoop, the data-processing tools usually run on the same servers that hold the data. Because of this proximity, data processing is generally fast even in large deployments. The framework pairs the Hadoop Distributed File System (HDFS) with the MapReduce programming model, both of which rest on a fully scalable storage mechanism.
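The MapReduce model mentioned here can be illustrated with the classic word-count example. The sketch below runs in a single Python process and does not use Hadoop’s API; the function names are hypothetical and only mirror the three stages (map, shuffle, reduce) that the framework would run across many servers.

```python
from collections import defaultdict


def map_phase(line):
    """Map step: emit a (word, 1) pair for every word in one input line."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle(pairs):
    """Shuffle step: group all values by key between map and reduce."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Reduce step: combine all counts collected for one word."""
    return key, sum(values)


def word_count(lines):
    """Run map, shuffle, and reduce in sequence over the input lines."""
    pairs = []
    for line in lines:
        pairs.extend(map_phase(line))
    grouped = shuffle(pairs)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


if __name__ == "__main__":
    print(word_count(["big data big", "Data lake"]))
```

On a real cluster, each map task would typically be scheduled on a node that already stores its slice of the input, which is why the proximity of processing and data matters for speed.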

So, if a business manages largely unstructured data sets, it should consider leveraging the power of Hadoop. A sufficiently large cluster can process around ten terabytes of data in a matter of minutes and petabytes of data within hours.

Flexibility Improves Framework Credibility

With Hadoop, businesses can easily modify their data systems as their needs and environments change. Hadoop’s flexibility allows it to link together many kinds of commodity hardware, including off-the-shelf systems. Because it is open source, anyone is free to change the way Hadoop performs certain functions. This ability to modify the framework has, in turn, improved its flexibility.

Hadoop lets businesses access fresh data sources and discover different data sets (both structured and unstructured) that can be used for drawing fresh, valuable insights. So whether the data is coming from a company’s social-media channels or its email conversations, Hadoop can process it all with improved credibility and unmatched flexibility.

The framework can also be used for a range of purposes such as data warehousing, recommendation systems, log processing, marketing campaign analysis, and fraud detection. Because it handles so many kinds of workloads, the framework is valued for its flexibility across different enterprises.

These benefits are new even to some organizations with mature processes. A business that wants to see the real benefits of large-scale data processing should consider working with Hadoop. Enterprises that are still new to the framework can draw on Hadoop consulting services. Such consulting and development services help companies use this infrastructure to build a safe data-management environment.

admin
